Founded in 2003 and directed by Professor Anita Raja, the Distributed Artificial Intelligence Research (DAIR) Lab focuses on the design and development of intelligent single and multi-agent systems. Lab members pursue research in distributed computing, convention formation, cascading risks, clinical informatics, computational monitoring and control, complex networks, machine learning, resource-bounded reasoning, and reasoning under uncertainty.

As embedded systems with collaborating agents become increasingly pervasive, these agents must adapt to dynamic, open environments. Effective adaptation requires prioritizing tasks, assessing resource availability, and identifying alternative strategies to meet current and future goals. A unifying theme in DAIR Lab research is the development of strategies that enable agents not only to adapt intelligently but also to reason about when and how much to adapt.

This interdisciplinary work includes collaborations with researchers in biology, cognitive science, cybersecurity, electrical engineering, genetics, intelligence analysis, medicine, software engineering, political science, urban planning, and visual analytics.

1. Advanced Machine Learning for Adverse Pregnancy Outcomes

The multifactorial nature of clinical data complicates the prediction and prevention of undesired outcomes. This project investigates whether more advanced machine learning methods, which consider all factors simultaneously, can yield better prediction and prevention methods.

Our approach to preterm birth prevention brings to bear stochastic methods to derive accurate, multidimensional prevention models from large collections of observational data. These methods will support prevention relative to different stages of pregnancy.

- Decision Making in Partially Observable Environments: We design, develop, and evaluate stochastic transition probability functions, cost-effectiveness analyses, and sensitivity analyses to support decision making in uncertain environments. We are studying this in the context of preventing undesired outcomes in clinical informatics.
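As a rough illustration of how a transition probability function, a cost-effectiveness ratio, and a one-way sensitivity analysis fit together, consider the minimal sketch below. It is not the lab's model: the three states, every probability, and every cost are hypothetical placeholders with no clinical meaning, and the sketch assumes a fully observed Markov chain, so it leaves out partial observability entirely.

```python
from typing import Dict, List, Tuple

Transition = Dict[str, Dict[str, float]]


def make_model(p_adverse: float) -> Transition:
    """Hypothetical 3-state chain; p_adverse is P(high_risk -> adverse) per stage."""
    return {
        "low_risk": {"low_risk": 0.9, "high_risk": 0.1},
        "high_risk": {"high_risk": 1.0 - p_adverse, "adverse": p_adverse},
        "adverse": {"adverse": 1.0},  # absorbing state
    }


def evaluate(P: Transition, stage_cost: Dict[str, float],
             horizon: int = 3) -> Tuple[float, float]:
    """Return (expected total cost, probability of being in 'adverse' at the end)."""
    dist = {s: 0.0 for s in P}
    dist["low_risk"] = 1.0  # assumption: everyone starts in the low-risk state
    total_cost = 0.0
    for _ in range(horizon):
        total_cost += sum(dist[s] * stage_cost[s] for s in P)
        nxt = {s: 0.0 for s in P}
        for s, row in P.items():
            for t, q in row.items():
                nxt[t] += dist[s] * q
        dist = nxt
    return total_cost, dist["adverse"]


# Hypothetical per-stage costs in arbitrary units; the intervention is assumed
# to add cost only while in the high-risk state.
NO_ACTION_COST = {"low_risk": 1.0, "high_risk": 2.0, "adverse": 0.0}
INTERVENTION_COST = {"low_risk": 1.0, "high_risk": 7.0, "adverse": 0.0}


def icer(p_treated: float, p_untreated: float = 0.30) -> float:
    """Incremental cost per adverse outcome avoided (intervention vs. no action)."""
    cost_0, adverse_0 = evaluate(make_model(p_untreated), NO_ACTION_COST)
    cost_1, adverse_1 = evaluate(make_model(p_treated), INTERVENTION_COST)
    return (cost_1 - cost_0) / (adverse_0 - adverse_1)


def one_way_sensitivity(values: List[float]) -> List[Tuple[float, float]]:
    """Vary one uncertain parameter and record how the ratio responds."""
    return [(p, icer(p)) for p in values]


if __name__ == "__main__":
    for p, ratio in one_way_sensitivity([0.05, 0.10, 0.15, 0.20, 0.25]):
        print(f"p_treated={p:.2f}  extra cost per adverse outcome avoided={ratio:.1f}")
```

In this toy setting the ratio grows as the assumed treated-arm probability approaches the untreated one, which is the kind of dependence a sensitivity analysis is meant to expose.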

Collaborators: Prof. Ansaf Salleb-Aouissi, Columbia University; Prof. Itsik Pe’er, Columbia University; Dr. Ron Wapner, Columbia University Medical Center.

Recent Publications:

Y.C. Lin, D. Mallia, A. O. Clark-Sevilla, et al. A comprehensive and bias-free machine learning approach for risk prediction of preeclampsia with severe features in a nulliparous study cohort. BMC Pregnancy Childbirth 24, 853 (2024). https://doi.org/10.1186/s12884-024-06988-w

R.R. Khan, R.F. Guerrero, R.J. Wapner, M.W. Hahn, A. Raja, A. Salleb-Aouissi, W.A. Grobman, H. Simhan, R.M. Silver, J.H. Chung, U.M. Reddy, P. Radivojac, I. Pe’er and D.M. Haas, “Genetic polymorphisms associated with adverse pregnancy outcomes in nulliparas.” Scientific Reports 14, 10514 (2024).

A.O. Clark-Sevilla, Y.C. Lin, A. Saxena, Q. Yan, R. Wapner, A. Raja, I. Pe’er, A. Salleb-Aouissi, “Diving into CDC pregnancy data in the United States: longitudinal study and interactive application.” JAMIA Open 7(1):ooae024, Mar. 2024. doi: 10.1093/jamiaopen/ooae024

Project page hosted in Prof. Salleb-Aouissi’s PRAISE lab

Students: Alisa Leshchenko, Mahdi Loodaricheh

Alums: Anton Goretsky, Adam Catto, Daniel Mallia

Funding Sponsors:

NIH 5R01: https://projectreporter.nih.gov/project_info_details.cfm?aid=9928205&map=y

NIH 3R01: https://projectreporter.nih.gov/project_info_details.cfm?aid=10148467&icde=0

NSF Eager Link: http://www.nsf.gov/awardsearch/showAward?AWD_ID=1454814

2. Multiagent Meta-level Control for the Future of Transportation Systems

As cities across the globe continue to grow, traffic congestion has become ubiquitous, with great economic and environmental costs. The increasing prevalence of self-driving vehicles creates an opportunity to build the smart, responsive traffic infrastructure of the future. Such an infrastructure, consisting of connected and autonomous vehicles and smart traffic lights, would have the potential to cope with congestion, weather phenomena, and accidents while maintaining safety and ensuring privacy of information. We argue that leveraging multiagent meta-level control (MMLC) to dynamically adjust traffic to changes in the environment improves travel times and decreases emissions in mixed traffic simulation environments. We develop distributed algorithms that enable connected vehicles to overcome traffic congestion efficiently and cost-effectively. [More Info]

Some related previous works:

- Emergence of Social Norms and Conventions in Multiagent Systems: In this project, we study the importance and challenges of establishing cooperation among self-interested agents in multiagent systems (MAS). The hypothesis of this work is that equipping agents in networked MAS with “network thinking” capabilities and using this contextual knowledge to form social norms in an effective and efficient manner improves the performance of the MAS. We investigate the social norm emergence problem in conventional norms (where there is no conflict between individual and collective interests) and essential norms (where agents need to explicitly cooperate to achieve socially-efficient behavior) from a game-theoretic perspective.

- Coordinating Meta-level Control Across Agent Boundaries: The fundamental question addressed in this work is how to determine and obtain the minimal overlapping context among decentralized decision makers required to make their decisions more consistent. Our approach is a two-phased learning process where agents first learn their policies offline within the context of a simplified environment where it is not necessary to know detailed context information about neighbors. We evaluate our approach by addressing meta-level decisions in a complex multiagent weather-tracking domain.
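To make the conventional-norm setting from the first item above more concrete, here is a minimal sketch of agents on a network repeatedly best-responding in a pure coordination game, where matching a neighbor's action is assumed to pay 1 and mismatching pays 0. The topology, the update rule, and all parameter values are hypothetical choices for illustration; this is not the lab's algorithm and it does not model "network thinking" or essential norms.

```python
import random
from collections import Counter
from typing import Dict, List, Set


def ring_with_shortcuts(n: int, shortcuts: int,
                        rng: random.Random) -> Dict[int, Set[int]]:
    """Hypothetical topology: a ring plus a few random long-range links."""
    nbrs: Dict[int, Set[int]] = {i: {(i - 1) % n, (i + 1) % n} for i in range(n)}
    for _ in range(shortcuts):
        a, b = rng.sample(range(n), 2)
        nbrs[a].add(b)
        nbrs[b].add(a)
    return nbrs


def best_response(agent: int, actions: List[int],
                  nbrs: Dict[int, Set[int]]) -> int:
    """With match=1 / mismatch=0 payoffs, the best response is the action
    played by the most neighbors; ties keep the agent's current action."""
    counts = Counter(actions[j] for j in nbrs[agent])
    top = max(counts.values())
    tied = [a for a, c in counts.items() if c == top]
    return actions[agent] if actions[agent] in tied else min(tied)


def simulate(n: int = 30, shortcuts: int = 10,
             rounds: int = 2000, seed: int = 0) -> float:
    """Return the fraction of agents playing the most common action at the end."""
    rng = random.Random(seed)
    nbrs = ring_with_shortcuts(n, shortcuts, rng)
    actions = [rng.randint(0, 1) for _ in range(n)]
    for _ in range(rounds):
        i = rng.randrange(n)  # one randomly chosen agent updates per round
        actions[i] = best_response(i, actions, nbrs)
    return max(Counter(actions).values()) / n


if __name__ == "__main__":
    print(f"share following the dominant convention: {simulate():.2f}")
```

A returned value of 1.0 would mean a single convention has spread to every agent; lower values indicate that competing local conventions persist under this simple rule.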

Collaborators: Prof. Ana Bazzan, UFRGS; Prof. Hasan, UNL

Students: Arezoo Bybordi, Matthew Carr, Eric Li

Alums: Yaroslava Shynkar, Marin Marinov, Nigel Ferrer

Funding Sponsor: PSC-CUNY Enhanced Grant, 2020-2022

3. Software Engineering for Machine Learning

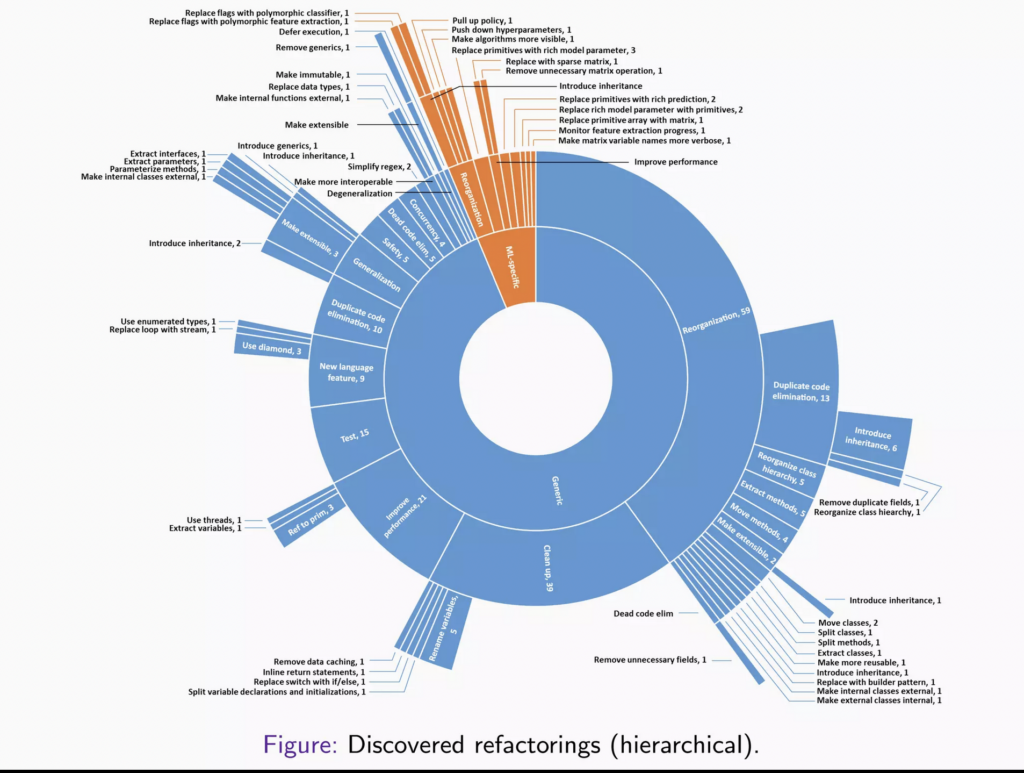

Machine Learning (ML) systems, i.e., those with ML capabilities, including Deep Learning (DL), are pervasive in today’s data-driven society. Such systems are complex; they comprise ML models and many subsystems that support learning processes. As with other complex systems, ML systems are prone to classic technical debt issues, especially when they are long-lived, but they also exhibit debt specific to ML. Unfortunately, there is a knowledge gap regarding how ML systems actually evolve and are maintained. Our recent work indicates that (i) developers refactor these systems for a variety of reasons, both specific and tangential to ML; (ii) some refactorings correspond to established technical debt categories, while others do not; and (iii) code duplication is a major cross-cutting theme that particularly involves ML configuration and model code, which was also the most refactored. We also introduce new ML-specific refactorings and technical debt categories and put forth several recommendations, best practices, and anti-patterns. The results can potentially assist practitioners, tool developers, and educators in facilitating long-term ML system usefulness. More information can be found here.

Collaborators: Prof. Raffi Khatchadourian

Student: Nan Jia

Funding Sponsors:

NSF: SHF: Small: Practical Analyses and Safe Transformations for Imperative Deep Learning Programs, 2022-2025

Click here for DAIR Lab Past Projects (2003-2019)

Click here for theses and projects supervised (2003-Present)